SAE International (formerly the Society of Automotive Engineers) defines six levels of driving automation, from Level 0 (no automation) to Level 5. A good deal of work is already being done in the lower levels, which include Level 1 (driver assistance), Level 2 (partial driving automation) and Level 3 (conditional driving automation). At the higher end, a Level 4 (high driving automation) vehicle drives itself without human intervention, but only within a defined set of operating conditions, while a Level 5 (full driving automation) vehicle does so in all conditions, including extreme ones.

Level 5 autonomous vehicles will likely not be seen on the roads anytime soon, but Level 4 vehicles are beginning to make an appearance in the form of public transport like the Olli shuttle. Olli uses Light Detection and Ranging (LiDAR) for its vision, as opposed to lower-level systems such as Tesla's Autopilot, which relies on forward-facing cameras and radar. While Olli operates at low speeds in urban environments, the technology used for the shuttle will likely transfer in the future to individual vehicles that can operate at higher speeds.

When imagining the autonomous vehicles of the future, the average person likely won’t think of all the various scenarios that affect autonomous performance. For example, a wet, icy or dirty road doesn’t affect human vision, but it does affect the “vision” of a car that relies on external sensors, which are exposed to the elements. Simulation software can predict how sensors will be affected by road conditions, and allow engineers to reposition them accordingly while the vehicle’s design is still in the 3D modeling stage.

Difficult road conditions are only one challenge presented in the design of autonomous vehicles. How will fog affect performance? How will a vehicle react to a pedestrian or animal running out in front of it? How do light and shadows interact with a vehicle’s vision and performance? These are all issues that can be addressed in simulation, which is much more efficient than physical testing.
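To make the fog question concrete, here is a minimal sketch of the kind of first-order estimate a sensor simulation might start from. It assumes a simple exponential (Beer-Lambert) attenuation model and the Koschmieder relation between meteorological visibility and extinction coefficient, and it ignores wavelength dependence and backscatter. The function names are illustrative and are not taken from any particular simulation product.

```python
import math


def extinction_coefficient(visibility_m: float) -> float:
    """Koschmieder relation: beta = -ln(0.02) / V, about 3.912 / V (units: 1/m).

    Assumes the conventional 2% contrast threshold for visibility.
    """
    if visibility_m <= 0:
        raise ValueError("visibility must be positive")
    return 3.912 / visibility_m


def two_way_transmission(range_m: float, visibility_m: float) -> float:
    """Fraction of emitted power surviving the trip to a target and back.

    Assumption: pure exponential attenuation along the path, so the
    round trip contributes a factor of 2 in the exponent.
    """
    beta = extinction_coefficient(visibility_m)
    return math.exp(-2.0 * beta * range_m)


if __name__ == "__main__":
    # Compare clear air (10 km visibility) with dense fog (100 m visibility).
    for visibility in (10_000.0, 100.0):
        for target_range in (25.0, 50.0, 100.0):
            t = two_way_transmission(target_range, visibility)
            print(f"visibility={visibility:>8.0f} m  range={target_range:>5.0f} m  "
                  f"transmission={t:.4f}")
```

Under these assumptions, a return from a target at half the visibility distance is already attenuated to about 2% of its clear-air strength, which is why fog scenarios are worth exercising virtually before any physical test.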

Delivering solutions that include a full hardware-in-the-loop simulation (HILS) system and real-time simulation will require the addition of complex driving scenarios. We have already seen some responses to this demand, such as display enhancements that render rain on a windshield. We know that pooling water can be visualized, but how will the vehicle respond?

Increasing the fidelity of the vehicle model will be a necessity, as will the proper simulation of hydroplaning. A vehicle also has to determine whether a road is slippery or merely wet, and needs to be able to switch from two- to four-wheel drive and back accordingly.
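The drive-mode decision described above can be sketched in a few lines. This is only an illustration under stated assumptions: it uses the standard driving slip ratio (how much faster the driven wheels turn than the vehicle moves) as a stand-in for "slippery", and the class name and threshold values are hypothetical rather than drawn from any production controller. Two thresholds are used so that the mode does not flicker when slip hovers near a single cutoff.

```python
from dataclasses import dataclass


def slip_ratio(wheel_speed_mps: float, vehicle_speed_mps: float) -> float:
    """Driving slip ratio: 0.0 is pure rolling, 1.0 is a wheel spinning in place.

    wheel_speed_mps is the wheel's circumferential speed (angular speed * radius).
    """
    if wheel_speed_mps <= 0.0:
        return 0.0
    return max(0.0, (wheel_speed_mps - vehicle_speed_mps) / wheel_speed_mps)


@dataclass
class DriveModeSelector:
    # Hypothetical thresholds, chosen only for illustration.
    engage_slip: float = 0.15   # switch to 4WD above this slip
    release_slip: float = 0.05  # return to 2WD below this slip
    mode: str = "2WD"

    def update(self, wheel_speed_mps: float, vehicle_speed_mps: float) -> str:
        """Update and return the drive mode for one control step."""
        slip = slip_ratio(wheel_speed_mps, vehicle_speed_mps)
        if self.mode == "2WD" and slip > self.engage_slip:
            self.mode = "4WD"
        elif self.mode == "4WD" and slip < self.release_slip:
            self.mode = "2WD"
        return self.mode


if __name__ == "__main__":
    selector = DriveModeSelector()
    # (wheel speed, vehicle speed) samples: grip, wheelspin, recovering, grip.
    for wheel, vehicle in [(20.0, 19.8), (25.0, 20.0), (22.0, 20.0), (20.2, 20.0)]:
        print(wheel, vehicle, selector.update(wheel, vehicle))
```

A real vehicle would combine many more signals than this, but even a toy rule like this one depends on the simulated tire-road interaction being accurate, which is where higher model fidelity pays off.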

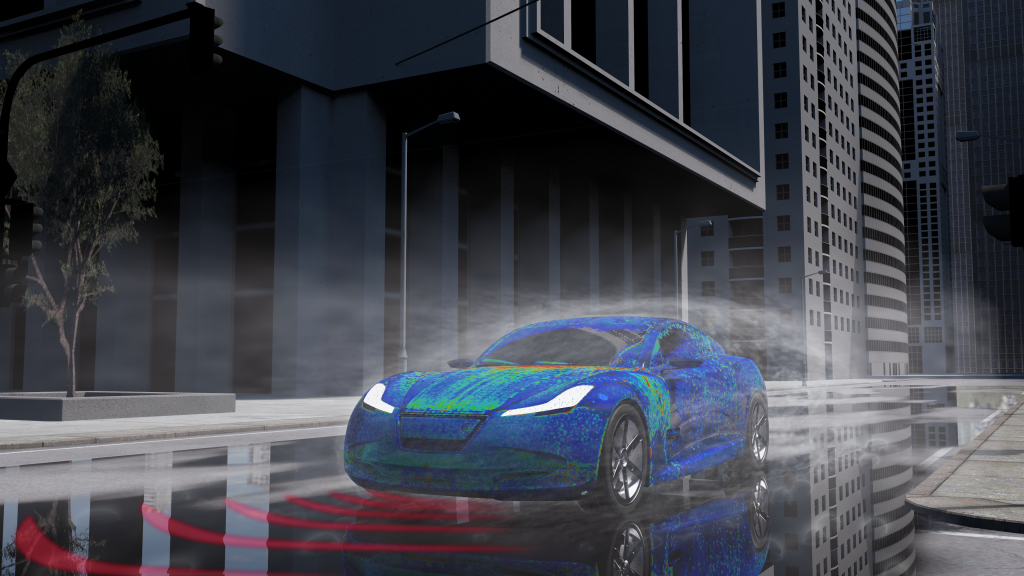

A variety of simulation solutions exist for each of these issues. Electromagnetic simulation models how a radar senses its environment through electromagnetic waves, making it possible to predict radar performance. Software suites such as Dassault Systèmes’ Simpack simulate the behavior of a vehicle in real time, while PowerFLOW simulates the soiling of sensors, projecting the effects of mud and water on the vehicle and its sensors. Severe weather can be simulated through features like a digital wind tunnel, which has been shown to be just as effective as a physical wind tunnel, if not closer to reality, but without the associated costs.

Simulation for autonomous vehicles isn’t a radical idea; GM has predicted that 95% of future AV tests will be virtual, not physical. This isn’t surprising considering the billions of miles of testing that are estimated to be needed before releasing these vehicles on the road. Manufacturers are seeing significant cost and time savings through the use of simulation, as issues that vehicles will face can be addressed in the earliest stages of pre-production.

We are well on the way to supporting solutions that identify the correct physics for each subsystem and help the user transfer the most important physics to real-time simulation scenarios. The path to success will require a step-by-step process of increasing the level of detail in the real-time simulation of autonomous vehicles.

It’s still a long way from conception to product when developing an autonomous vehicle, but the simulation solutions currently in development can greatly help to reduce time to market for creating safe AVs.


This article, “Simulation of the Future: Autonomous Vehicles, Part 1”, was originally published on the SIMULIA blog Reveal the World We Live In.